InternDataEngine

High-Fidelity Synthetic Data Generator for Robotic Manipulation

About

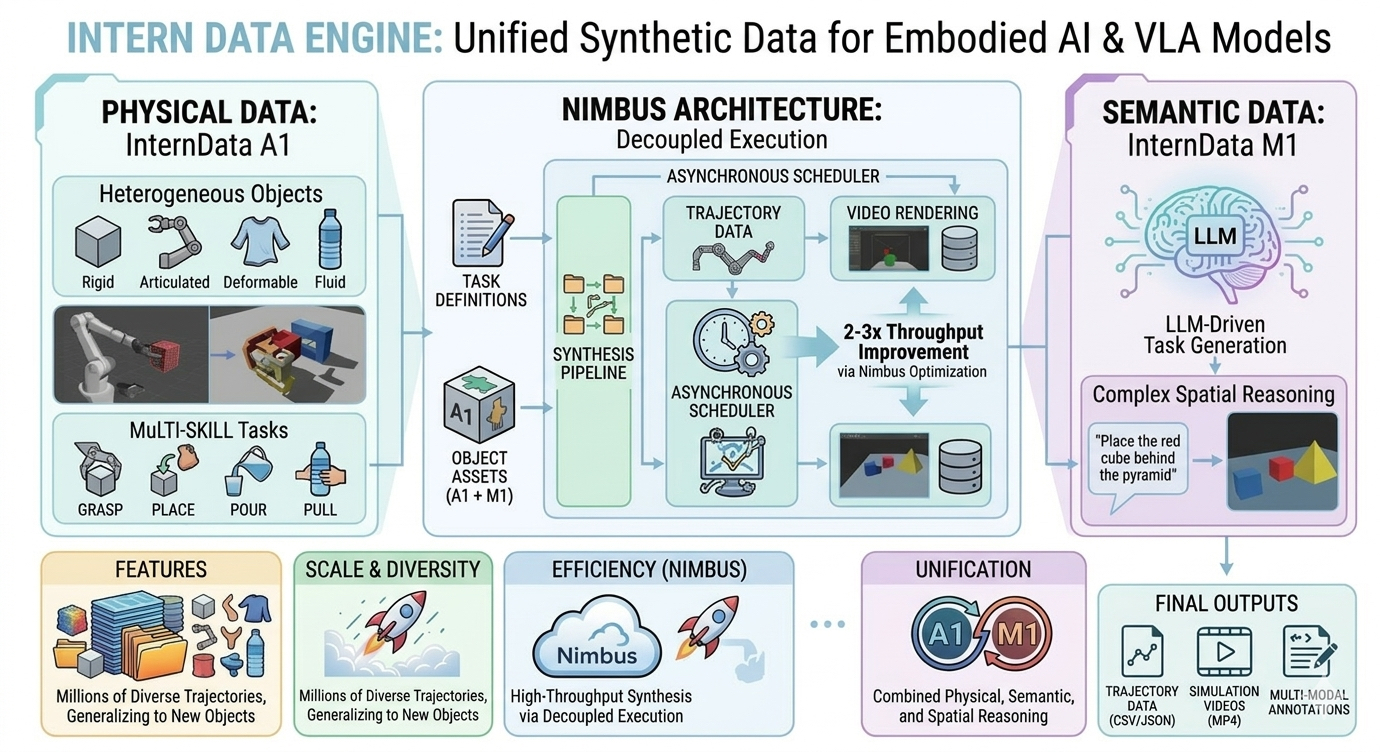

InternDataEngine is a synthetic data generation engine for embodied AI, built on NVIDIA Isaac Sim. It unifies high-fidelity physical interaction (InternData-A1), semantic task and scene generation (InternData-M1), and high-throughput scheduling (Nimbus) to deliver realistic, task-aligned, and scalable robotic manipulation data.

Key capabilities:

- Realistic physical interaction -- Unified simulation of rigid, articulated, deformable, and fluid objects across single-arm, dual-arm, and humanoid robots. Supports long-horizon, skill-composed manipulation for sim-to-real transfer.

- Diverse data generation -- Multi-dimensional domain randomization (layout, texture, structure, lighting) with rich multimodal annotations (bounding boxes, segmentation masks, keypoints).

- Efficient large-scale production -- Nimbus-powered asynchronous pipelines that decouple planning, rendering, and storage, achieving 2-3x higher end-to-end throughput with cluster-level load balancing and fault tolerance.
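The decoupling of planning, rendering, and storage described in the last bullet can be illustrated with a small producer-consumer sketch. This is not Nimbus code: the stage bodies, queue size, and record fields below are hypothetical placeholders, and threads stand in for whatever workers the real scheduler uses. It only shows the general idea that each stage runs independently and hands work to the next through a bounded queue.

```python
import queue
import threading

SENTINEL = None  # marks the end of the stream for downstream stages


def run_stage(work, inbox, outbox):
    """Apply `work` to each item from `inbox` and forward the result to `outbox`."""
    while True:
        item = inbox.get()
        if item is SENTINEL:
            outbox.put(SENTINEL)  # propagate shutdown to the next stage
            return
        outbox.put(work(item))


def run_pipeline(episode_ids, queue_size=4):
    """Run plan -> render -> store concurrently and return the stored records in order."""
    to_plan = queue.Queue(maxsize=queue_size)
    to_render = queue.Queue(maxsize=queue_size)
    to_store = queue.Queue(maxsize=queue_size)
    stored = queue.Queue()  # unbounded, so the last stage never blocks

    # Hypothetical stage bodies; real stages would call a planner, a renderer, and a writer.
    def plan(ep):
        return {"episode": ep, "plan": f"trajectory-{ep}"}

    def render(rec):
        return {**rec, "frames": 3}

    def store(rec):
        return {**rec, "saved": True}

    stages = [
        threading.Thread(target=run_stage, args=(plan, to_plan, to_render)),
        threading.Thread(target=run_stage, args=(render, to_render, to_store)),
        threading.Thread(target=run_stage, args=(store, to_store, stored)),
    ]
    for thread in stages:
        thread.start()
    for ep in episode_ids:
        to_plan.put(ep)  # blocks when the planner falls behind (backpressure)
    to_plan.put(SENTINEL)
    for thread in stages:
        thread.join()

    results = []
    while True:
        rec = stored.get()
        if rec is SENTINEL:
            return results
        results.append(rec)
```

Because each stage has a single worker and the queues are FIFO, records come out in the order the episodes went in. A slow stage only stalls its upstream neighbours once the bounded queue between them fills up.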

Prerequisites

| Dependency | Version |
|---|---|
| NVIDIA Isaac Sim | 5.0.0 (Kit 107.x) |
| CUDA Toolkit | >= 12.8 |
| Python | 3.10 |
| GPU | NVIDIA RTX (tested on RTX PRO 6000 Blackwell) |

For detailed environment setup (conda, CUDA, PyTorch, curobo), see install.md.

If migrating from Isaac Sim 4.5.0, see migrate/migrate.md for known issues and fixes.
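The Python and CUDA requirements from the table above can be sanity-checked with a short script. This is a minimal sketch and not part of the repository; the function names are hypothetical. Note that `torch.version.cuda` reports the CUDA version PyTorch was built against, which is used here only as a proxy for the installed toolkit version.

```python
import sys

REQUIRED_PYTHON = (3, 10)  # Python version from the prerequisites table
MIN_CUDA = (12, 8)         # minimum CUDA version from the prerequisites table


def parse_version(text):
    """Turn '12.8' or '12.8.1' into a comparable tuple such as (12, 8)."""
    return tuple(int(part) for part in text.split(".")[:2])


def check_environment(python_version, cuda_version):
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    if tuple(python_version[:2]) != REQUIRED_PYTHON:
        problems.append(
            f"Python {REQUIRED_PYTHON[0]}.{REQUIRED_PYTHON[1]} required, "
            f"found {python_version[0]}.{python_version[1]}"
        )
    if cuda_version is None:
        problems.append("CUDA version unknown (is a CUDA build of PyTorch installed?)")
    elif parse_version(cuda_version) < MIN_CUDA:
        problems.append(f"CUDA >= {MIN_CUDA[0]}.{MIN_CUDA[1]} required, found {cuda_version}")
    return problems


if __name__ == "__main__":
    try:
        import torch
        cuda = torch.version.cuda  # None for CPU-only builds
    except ImportError:
        cuda = None
    for line in check_environment(sys.version_info, cuda) or ["environment looks OK"]:
        print(line)
```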

Quick Start

1. Install

# Create conda environment

conda create -n banana500 python=3.10

conda activate banana500

# Install CUDA 12.8 and set up Isaac Sim 5.0.0

conda install -y cuda-toolkit=12.8

source ~/isaacsim500/setup_conda_env.sh

export CUDA_HOME="$CONDA_PREFIX"

# Install PyTorch (CUDA 12.8)

pip install torch==2.7.0 torchvision==0.22.0 torchaudio==2.7.0 --index-url https://download.pytorch.org/whl/cu128

# Install project dependencies

pip install -r requirements.txt

# Install curobo (motion planning)

cd workflows/simbox/curobo

export TORCH_CUDA_ARCH_LIST="12.0+PTX" # Set to your GPU's compute capability

pip install -e ".[isaacsim]" --no-build-isolation # Quotes keep zsh from globbing the brackets

cd ../../..

See install.md for the full step-by-step guide including troubleshooting.
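The `TORCH_CUDA_ARCH_LIST` value in the curobo step depends on the GPU. If PyTorch is already installed, the compute capability can be queried instead of looked up by hand. The helper below is a hypothetical sketch, not part of the repository; it uses `torch.cuda.get_device_capability`, which returns a `(major, minor)` tuple.

```python
def arch_list_entry(major, minor, with_ptx=True):
    """Format a compute capability as a TORCH_CUDA_ARCH_LIST entry, e.g. '12.0+PTX'."""
    entry = f"{major}.{minor}"
    return entry + "+PTX" if with_ptx else entry


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        torch = None
    if torch is not None and torch.cuda.is_available():
        major, minor = torch.cuda.get_device_capability(0)  # first visible GPU
        print(f'export TORCH_CUDA_ARCH_LIST="{arch_list_entry(major, minor)}"')
    else:
        print("No CUDA device visible to PyTorch; set TORCH_CUDA_ARCH_LIST manually.")
```

The printed `export` line can be pasted into the shell before running the curobo `pip install` step.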

2. Run Data Generation

# Full pipeline: plan trajectories + render + save

python launcher.py --config configs/simbox/de_plan_and_render_template.yaml

Output is saved to output/simbox_plan_and_render/ including:

- demo.mp4 -- rendered video from robot cameras

- LMDB data files for model training
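To confirm that a run produced both kinds of output, the directory can be scanned with the standard library alone. This is a sketch under assumptions: the exact layout beneath the output directory is not documented here, so the function searches recursively, and it relies on the LMDB default of storing an environment as a directory containing a `data.mdb` file. The function name is hypothetical.

```python
from pathlib import Path


def summarize_output(root):
    """List rendered videos and LMDB environment directories found under `root`."""
    root = Path(root)
    videos = sorted(p.relative_to(root).as_posix() for p in root.rglob("*.mp4"))
    # An LMDB environment directory is identified by the data.mdb file inside it.
    lmdb_dirs = sorted(
        {p.parent.relative_to(root).as_posix() for p in root.rglob("data.mdb")}
    )
    return {"videos": videos, "lmdb_dirs": lmdb_dirs}


if __name__ == "__main__":
    print(summarize_output("output/simbox_plan_and_render"))
```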

3. Available Pipeline Configs

| Config | Description |
|---|---|
| de_plan_and_render_template.yaml | Full pipeline: plan + render + save |
| de_plan_template.yaml | Plan trajectories only (no rendering) |
| de_render_template.yaml | Render from existing plans |
| de_plan_with_render_template.yaml | Plan with live rendering preview |
| de_pipe_template.yaml | Pipelined mode for throughput |
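When switching between these configs in scripts, a small lookup can build the launcher command. The sketch below assumes that every config in the table lives in configs/simbox/ and is passed through the same `--config` flag shown in step 2. The short mode names and the function name are hypothetical.

```python
import shlex

CONFIG_DIR = "configs/simbox"

# Hypothetical short names mapped to the config files listed in the table.
PIPELINE_CONFIGS = {
    "plan_and_render": "de_plan_and_render_template.yaml",
    "plan": "de_plan_template.yaml",
    "render": "de_render_template.yaml",
    "plan_with_render": "de_plan_with_render_template.yaml",
    "pipe": "de_pipe_template.yaml",
}


def build_command(mode):
    """Return the launcher invocation for a pipeline mode as an argument list."""
    try:
        config = PIPELINE_CONFIGS[mode]
    except KeyError:
        raise ValueError(
            f"unknown mode {mode!r}; choose from {sorted(PIPELINE_CONFIGS)}"
        ) from None
    return ["python", "launcher.py", "--config", f"{CONFIG_DIR}/{config}"]


if __name__ == "__main__":
    # Print the command instead of running it, so it can be inspected first.
    print(shlex.join(build_command("plan")))
```

Returning an argument list rather than a string means the result can be handed to `subprocess.run` directly without shell quoting concerns.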

4. Configuration

The main config file (configs/simbox/de_plan_and_render_template.yaml) controls:

simulator:

  headless: True # Set False for GUI debugging

  renderer: "RayTracedLighting" # Or "PathTracing" for higher quality

  physics_dt: 1/30

  rendering_dt: 1/30
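A plain YAML loader reads a value such as `1/30` as the string "1/30" and not as a number. How the launcher evaluates these fields is not shown here, so the following is only a sketch of one way to interpret such values when inspecting a config from a script. The function names are hypothetical, and the whole-number check reflects an assumption that each rendered frame should span an integer number of physics steps.

```python
from fractions import Fraction


def to_fraction(value):
    """Interpret '1/30', '0.02', or a plain number as an exact fraction of a second."""
    if isinstance(value, str):
        return Fraction(value.replace(" ", ""))
    return Fraction(value)  # ints and floats are accepted directly


def steps_per_frame(physics_dt, rendering_dt):
    """Number of physics steps per rendered frame, assuming it must be a whole number."""
    ratio = to_fraction(rendering_dt) / to_fraction(physics_dt)
    if ratio.denominator != 1:
        raise ValueError("rendering_dt is not an integer multiple of physics_dt")
    return ratio.numerator


if __name__ == "__main__":
    print(float(to_fraction("1/30")))       # timestep in seconds
    print(steps_per_frame("1/30", "1/30"))  # values from the config above
```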

Task configs are in workflows/simbox/core/configs/tasks/. The example task (sort_the_rubbish) demonstrates a dual-arm pick-and-place scenario.

Project Structure

InternDataEngine/

  configs/simbox/ # Pipeline configuration files

  launcher.py # Main entry point

  nimbus_extension/ # Nimbus framework components

  workflows/simbox/

    core/

      configs/ # Task, robot, arena, camera configs

      controllers/ # Motion planning (curobo integration)

      skills/ # Manipulation skills (pick, place, etc.)

      tasks/ # Task definitions

    example_assets/ # Example USD assets (robots, objects, tables)

    curobo/ # GPU-accelerated motion planning library

  migrate/ # Migration tools and documentation

  output/ # Generated data output

Documentation

- Installation Guide (install.md) -- Environment setup and dependency installation

- Migration Guide (migrate/migrate.md) -- Isaac Sim 4.5.0 to 5.0.0 migration notes and tools

- Online Documentation -- Full API docs, tutorials, and advanced usage

License and Citation

This project is based on InternDataEngine by InternRobotics, licensed under CC BY-NC-SA 4.0.

If this project helps your research, please cite the following papers:

@article{tian2025interndata,

title={Interndata-a1: Pioneering high-fidelity synthetic data for pre-training generalist policy},

author={Tian, Yang and Yang, Yuyin and Xie, Yiman and Cai, Zetao and Shi, Xu and Gao, Ning and Liu, Hangxu and Jiang, Xuekun and Qiu, Zherui and Yuan, Feng and others},

journal={arXiv preprint arXiv:2511.16651},

year={2025}

}

@article{he2026nimbus,

title={Nimbus: A Unified Embodied Synthetic Data Generation Framework},

author={He, Zeyu and Zhang, Yuchang and Zhou, Yuanzhen and Tao, Miao and Li, Hengjie and Tian, Yang and Zeng, Jia and Wang, Tai and Cai, Wenzhe and Chen, Yilun and others},

journal={arXiv preprint arXiv:2601.21449},

year={2026}

}

@article{chen2025internvla,

title={Internvla-m1: A spatially guided vision-language-action framework for generalist robot policy},

author={Chen, Xinyi and Chen, Yilun and Fu, Yanwei and Gao, Ning and Jia, Jiaya and Jin, Weiyang and Li, Hao and Mu, Yao and Pang, Jiangmiao and Qiao, Yu and others},

journal={arXiv preprint arXiv:2510.13778},

year={2025}

}